A packaging mistake shipped half a million lines of TypeScript to the public. No malicious actor, no sophisticated exploit. Just speed, and a gap where a guardrail should have been.

That is the Claude Code incident in one sentence. And if you read it as a story about Anthropic, you are missing the point entirely.

This Is Not About Anthropic

Anthropic will be fine. This is a blip. They have the engineering depth, the funding, and the brand resilience to absorb it and move on. I do not think this hurts them in the long run.

But the incident is a canary. It is emblematic of where the entire AI sector sits right now. It feels like a Gold Rush out there. Everyone is racing to beat everyone else to market, and Anthropic has been on an absolute tear since the start of this year. At some point, when you are moving that fast, something slips. It was almost inevitable.

What makes it worth examining is the irony. This is a company that sells itself on safety, one that puts safety and security at the center of its public identity. And the exposure came not from a sophisticated attack but from a human being moving quickly during a packaging step.

Where the Real Risk Actually Lives

There is a version of the security conversation that obsesses over external threats. Hackers, state actors, adversarial inputs. That stuff matters. But this incident points somewhere else entirely.

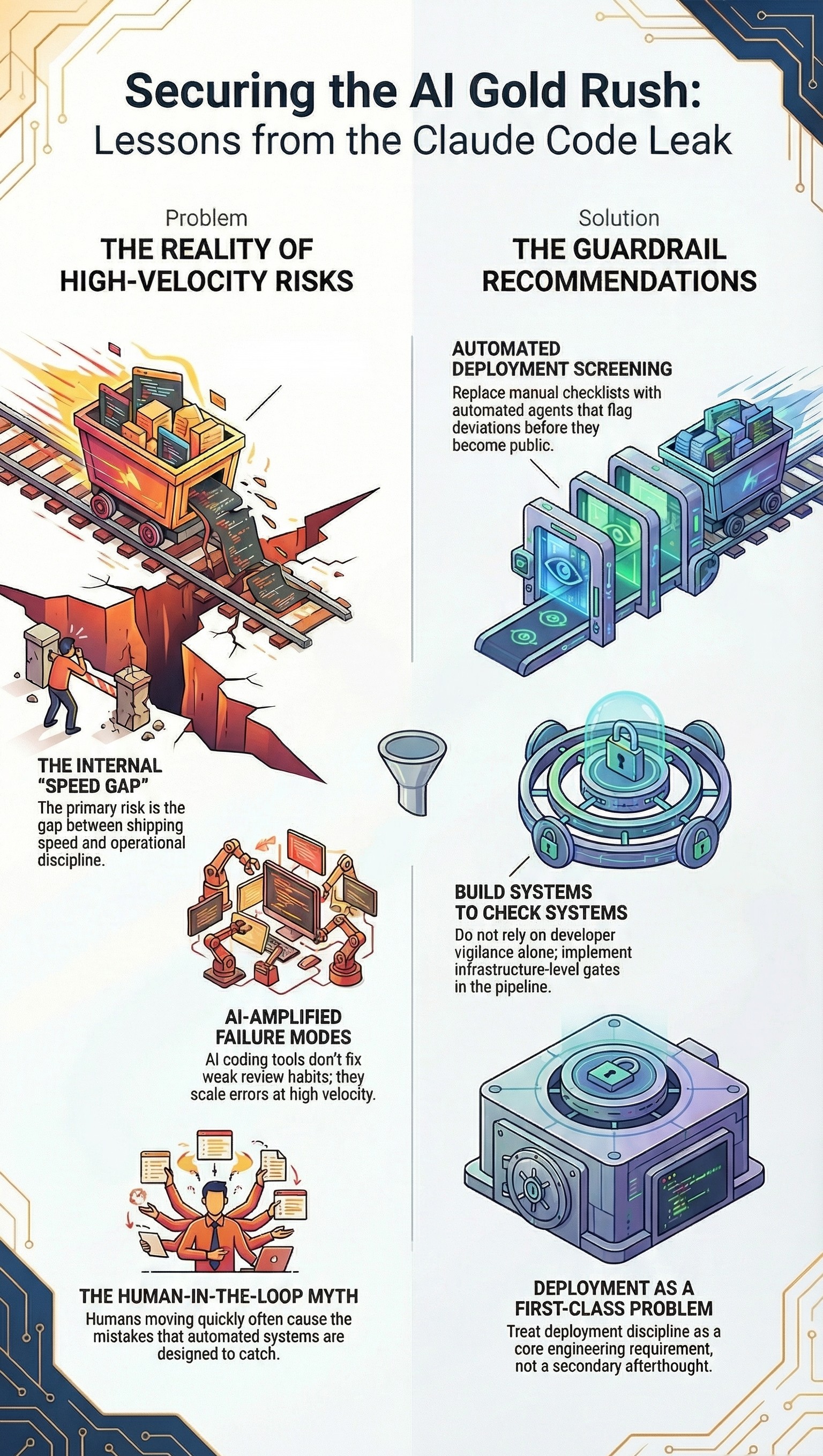

The real attack surface in fast-moving AI shops is internal. It is the gap between how fast a team can ship and how mature its operational discipline actually is. When you are trying to ship features as fast as possible to beat competitors to market, that speed comes at a cost. Even when the mistake is made by one person, it reveals that the infrastructure to prevent that kind of human error is not there yet.

One configuration mistake is a bad day. Two configuration-related exposures from the same team inside five days is a signal. It tells you the guardrails are missing, or at minimum, incomplete. There is no gate in the pipeline catching this class of problem before it goes out.
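A gate for this class of problem can start very small. The sketch below is a hypothetical pre-publish check: it takes the list of paths a package is about to publish (for example, the `path` fields that `npm pack --dry-run --json` reports) and blocks anything matching a denylist. The names `screenPackageFiles` and `FORBIDDEN_FILES` and the rules themselves are illustrative assumptions, not a complete policy. Source maps are on the list because they can embed original source code in their `sourcesContent` field.

```typescript
// Hypothetical pre-publish gate: flag files that should never ship in a package.
// Assumption: `files` holds the paths the package would publish, e.g. the `path`
// fields from `npm pack --dry-run --json`. The rules are illustrative, not exhaustive.

interface Finding {
  path: string;
  reason: string;
}

const FORBIDDEN_FILES: Array<{ pattern: RegExp; reason: string }> = [
  { pattern: /\.map$/, reason: "source map (may embed original source)" },
  { pattern: /(^|\/)\.env(\..*)?$/, reason: "environment file" },
  { pattern: /\.(pem|key|p12)$/, reason: "key or certificate material" },
  { pattern: /(^|\/)\.npmrc$/, reason: "registry config (may hold auth tokens)" },
];

// Returns one finding per (file, rule) match; an empty array means the gate passes.
function screenPackageFiles(files: string[]): Finding[] {
  const findings: Finding[] = [];
  for (const path of files) {
    for (const rule of FORBIDDEN_FILES) {
      if (rule.pattern.test(path)) {
        findings.push({ path, reason: rule.reason });
      }
    }
  }
  return findings;
}

// Example: the source map is blocked, the compiled bundle is not.
const blocked = screenPackageFiles(["package.json", "dist/cli.js", "dist/cli.js.map"]);
for (const f of blocked) {
  console.log(`BLOCKED ${f.path}: ${f.reason}`);
}
```

Running a check like this as a required CI step before `npm publish` turns a missing guardrail into a failed build rather than a public exposure. A denylist only catches what someone thought to list, so treat it as a floor rather than a complete screen.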

AI Writing AI Is the Part Nobody Has Figured Out Yet

Here is where it gets genuinely new. The Claude Code codebase, 512,000 lines of TypeScript, was almost certainly built with significant AI assistance. Anthropic's own tools writing Anthropic's own tooling. That loop is now standard in high-velocity AI shops.

The productivity gains are real: teams write and ship code faster. But faster at what, exactly? If your team already has weak review habits, AI coding tools do not fix that. They amplify it. You are not reviewing one pull request. You are reviewing ten, all generated at a pace no human process was designed for.

The older failure modes (misconfigured packages, secrets in the wrong place, dependency confusion) do not disappear when AI writes the code. They scale. And then there are the genuinely new failure modes, the ones we cannot fully anticipate yet, that come from AI systems making improvements to other AI systems. We have not dealt with that before. We do not have a playbook for it. We barely know what to look for.
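Secrets in the wrong place are one of those older failure modes that scale with output volume, and they are also among the easiest to screen mechanically. A minimal sketch, assuming each changed or packaged file can be read as text: scan it line by line against known credential shapes. `scanForSecrets`, `SECRET_RULES`, and the generic API-key heuristic are hypothetical; the AWS access key ID prefix and the PEM private-key header are real formats, but dedicated secret scanners cover far more.

```typescript
// Hypothetical content scan for credentials committed or packaged by mistake.
// A small illustrative rule set; dedicated secret scanners ship far more rules.

const SECRET_RULES: Array<{ label: string; pattern: RegExp }> = [
  // AWS access key IDs: "AKIA" followed by 16 uppercase letters or digits.
  { label: "AWS access key ID", pattern: /\bAKIA[0-9A-Z]{16}\b/ },
  // PEM header for RSA, EC, OpenSSH, encrypted, or generic private keys.
  { label: "private key block", pattern: /-----BEGIN [A-Z ]*PRIVATE KEY-----/ },
  // Assumed heuristic: a quoted literal of 16+ chars assigned to an api_key-like name.
  { label: "hardcoded API key", pattern: /api[_-]?key\s*[:=]\s*["'][^"']{16,}["']/i },
];

// Returns "file:line: label" strings for every rule that matches a line.
function scanForSecrets(fileName: string, content: string): string[] {
  const hits: string[] = [];
  content.split("\n").forEach((line, index) => {
    for (const rule of SECRET_RULES) {
      if (rule.pattern.test(line)) {
        hits.push(`${fileName}:${index + 1}: ${rule.label}`);
      }
    }
  });
  return hits;
}

// Example: AKIAIOSFODNN7EXAMPLE is the placeholder key ID used in AWS documentation.
// Line 2 is reported as an AWS access key ID; line 1 is clean.
const sample = 'const region = "us-east-1";\nconst accessKeyId = "AKIAIOSFODNN7EXAMPLE";';
console.log(scanForSecrets("src/config.ts", sample));
```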

The Human in the Loop Would Not Have Caught This

The instinct when something like this happens is to say "put a human in the loop." I do not think that is the right call here. A human was in the loop. That is how the mistake happened in the first place.

The more honest answer is that teams shipping at this pace need to invest in protocols and automated guardrails designed specifically to screen every deployment for this class of issue. Not a checklist. Not a reminder in Notion. An agent, trained and scoped to catch exactly these kinds of exposure vectors before anything goes out.

That investment takes time and focus, and both are hard to justify when competitive pressure is telling you to ship the next feature. That tension is the actual story here. And it is not going to resolve itself. We are going to see more incidents like this. The only real question is which team is next.

The Discipline You Cannot Cut Corners On

If I am advising a team moving at this speed, the one thing I would not trade away is automated deployment screening. Not a human review gate. Not a policy document. Actual automation that understands what a safe deployment looks like for your specific context and flags deviations before they become public incidents.
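One way to encode "what a safe deployment looks like for your specific context" is a baseline: the files a release is allowed to contain, plus a size budget for the whole package. The sketch below flags anything outside that baseline. `ReleaseBaseline`, `deviationsFromBaseline`, and the entry convention (a trailing `/` means a directory prefix, anything else is an exact path) are assumptions for illustration.

```typescript
// Hypothetical baseline check: describe what a safe release contains, flag the rest.
// Entry convention (an assumption): a trailing "/" marks a directory prefix,
// anything else must match a path exactly.

interface PackedFile {
  path: string;
  size: number; // bytes
}

interface ReleaseBaseline {
  allowed: string[];
  maxTotalBytes: number; // size budget for the whole package
}

function deviationsFromBaseline(files: PackedFile[], baseline: ReleaseBaseline): string[] {
  const deviations: string[] = [];
  let totalBytes = 0;
  for (const file of files) {
    totalBytes += file.size;
    const isAllowed = baseline.allowed.some((entry) =>
      entry.endsWith("/") ? file.path.startsWith(entry) : file.path === entry
    );
    if (!isAllowed) {
      deviations.push(`unexpected file: ${file.path}`);
    }
  }
  // An allowlisted directory can still pick up a surprise artifact, so also watch size.
  if (totalBytes > baseline.maxTotalBytes) {
    deviations.push(`package is ${totalBytes} bytes, over the ${baseline.maxTotalBytes}-byte budget`);
  }
  return deviations;
}

// Example baseline for a CLI that should ship only compiled output and metadata.
const cliBaseline: ReleaseBaseline = {
  allowed: ["package.json", "README.md", "LICENSE", "dist/"],
  maxTotalBytes: 5_000_000,
};
```

The size budget earns its place because an allowlisted directory such as `dist/` can still pick up an unexpected artifact; a sudden jump in package size is a cheap signal that something new is riding along.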

The software supply chain conversation has been heating up for years. Open source already makes up most of what modern applications are built on. As AI increases the volume of external packages entering your environment, the surface area for this kind of mistake grows with it. You cannot rely on developer vigilance alone to cover that ground. You need systems that check the systems.
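A small step toward systems that check the systems is to make every new external package visible at release time. This hypothetical sketch diffs the `dependencies` map from the last release against the candidate's and reports added packages, changed versions, and ranges that are not pinned to an exact version. `diffDependencies` and `DependencyReport` are illustrative names, and treating anything other than a plain `x.y.z` version as unpinned is a deliberate simplification that ignores lockfiles, which pin resolved versions for your own installs.

```typescript
// Hypothetical release check: make every new or changed dependency visible.
// Inputs mirror the `dependencies` object in package.json (name -> version range).

type DepMap = Record<string, string>;

interface DependencyReport {
  added: string[];
  changed: string[];
  unpinned: string[];
}

// Simplification: only a plain x.y.z (optionally with a prerelease tag) counts as pinned.
const EXACT_VERSION = /^\d+\.\d+\.\d+(-[0-9A-Za-z.-]+)?$/;

function diffDependencies(previous: DepMap, candidate: DepMap): DependencyReport {
  const report: DependencyReport = { added: [], changed: [], unpinned: [] };
  for (const [dep, range] of Object.entries(candidate)) {
    if (!(dep in previous)) {
      report.added.push(dep);
    } else if (previous[dep] !== range) {
      report.changed.push(dep);
    }
    if (!EXACT_VERSION.test(range)) {
      report.unpinned.push(dep);
    }
  }
  return report;
}
```

A non-empty `added` list is a natural trigger for a human or automated review of the new package before it ships.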

The Gold Rush analogy holds here. When everyone is panning as fast as they can, the ones who build the right equipment early end up ahead. Right now most teams are still panning by hand and calling it velocity.

The Playbook for High-Velocity AI

If you are panning for gold, you need the right equipment. You cannot just dig faster and hope the mountain does not collapse. Surviving this race requires a fundamental shift in operational maturity, one that closes the gap between shipping speed and safety. Here is what that actually looks like:

First, kill the checklist. Do not rely on a "human in the loop," a policy document, or a reminder in Notion to catch a packaging error. Human error at high speed is exactly what caused this leak in the first place. Instead, you need actual automation. Build or deploy an agent scoped specifically to understand what a safe deployment looks like in your context, and let it flag deviations before they become public incidents.

Second, you need systems that check systems. AI coding tools do not fix weak review habits; they amplify them. When you are reviewing pull requests generated at a pace no human process was designed for, older failure modes like misconfigured packages scale rapidly. Developer vigilance alone cannot cover this expanding surface area. You have to automate the oversight.
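Automating the oversight does not have to mean reviewing everything with the same weight. A hypothetical sketch: classify each pull request by the files it touches and send anything that changes release-critical configuration to a stricter review lane, whoever or whatever wrote it. `classifyPullRequest` and the path list are assumptions for an npm-published TypeScript CLI; settings such as source-map generation live in files like `tsconfig.json` (the `sourceMap` compiler option) and bundler configs, which is why they are on it.

```typescript
// Hypothetical review router: PRs that touch release-critical files get a stricter lane,
// regardless of whether a person or a coding assistant authored them.
// The path list is an assumed example for an npm-published TypeScript CLI.

const RELEASE_CRITICAL: RegExp[] = [
  /^package\.json$/, // "files", "bin", publish settings
  /(^|\/)\.npmignore$/, // what gets excluded from the tarball
  /(^|\/)tsconfig[^/]*\.json$/, // e.g. the sourceMap compiler option
  /(^|\/)(rollup|webpack|vite)\.config\.[cm]?[jt]s$/, // bundler output settings
  /^\.github\/workflows\//, // the release pipeline itself
];

type ReviewLane = "standard" | "release-critical";

function classifyPullRequest(changedFiles: string[]): { lane: ReviewLane; reasons: string[] } {
  // `reasons` lists the changed files that triggered the stricter lane.
  const reasons = changedFiles.filter((file) => RELEASE_CRITICAL.some((re) => re.test(file)));
  return { lane: reasons.length > 0 ? "release-critical" : "standard", reasons };
}

// Example: a generated PR that quietly edits the build config gets escalated.
const decision = classifyPullRequest(["src/commands/login.ts", "tsconfig.build.json"]);
console.log(decision.lane, decision.reasons);
```

Routing by path is crude, but it is deterministic and cheap, which matters when the volume of pull requests outpaces anyone's attention.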

Third, stop cutting corners for velocity. The competitive pressure to ship the next feature is intense, but skipping guardrails to get to market is a false economy. Missing gates in your pipeline are a glaring signal that your infrastructure is incomplete. You have to treat deployment discipline as a first-class engineering problem. Build the safety equipment early, or accept that a public exposure is simply a matter of time.

I keep coming back to that word, inevitable. Because that is what it felt like watching this unfold. Not shocking, not a scandal. Just the predictable output of extreme speed meeting immature operational infrastructure. The part that should concern everyone in this industry is not that it happened to Anthropic. It is that it will keep happening, to teams with far less margin for error, until we treat deployment discipline as a first-class engineering problem and not an afterthought we get to eventually.